If you're not testing, you're not improving.

However often you blog or publish other content, the core pages of your website (the home, product, service and other sales and landing pages) will be predominantly static.

Static content, by definition, gives you no insight into how effective it is compared with possible alternatives. Without that insight, you have no way to confidently or systematically improve the performance of the static pages of your site. Given that even a minor change to a page can have a pronounced impact on performance, if you are not testing alternatives you are potentially missing out on huge gains.

Why do I need to A/B test?

Sure, you could change a static element of your website and compare its performance with the previous version, but this is neither efficient nor effective: the two versions are measured over different time periods, so any change in traffic between those periods can skew the comparison.

A/B testing describes the process of testing two or more different versions of an element of your website against one another to identify which one performs best. Basically, visitor 1 to your website is shown version A and visitor 2 is shown version B, and so on, A, B, A, B.
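
The A, B, A, B rotation described above can be sketched in a few lines of Python. This is only an illustration: the function name and visitor IDs are hypothetical, and real testing tools typically assign visitors at random rather than in strict rotation.

```python
from itertools import cycle


def assign_variations(visitor_ids, variations=("A", "B")):
    """Pair each visitor with a variation in strict rotation: A, B, A, B, ..."""
    rotation = cycle(variations)
    return {visitor: next(rotation) for visitor in visitor_ids}


# Hypothetical visitor IDs, in order of arrival
assignments = assign_variations(
    ["visitor-1", "visitor-2", "visitor-3", "visitor-4"]
)
print(assignments)
```

Because both versions are shown during the same period, each one sees the same mix of days, times and traffic conditions.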

A/B testing is the best and most reliable way to test variations of webpages, identify the best-performing variations and iteratively improve your website's overall performance.

When should I A/B test?

If website performance is important to your business, you would ideally be A/B testing all the time. There are many, many elements that you can apply an A/B test to and there will always be room for improvement in your website's performance.

Perhaps the only time you should not test is when you know that website traffic is going to be very different from the norm. At Christmas, for example, reduced traffic levels would result in less conclusive tests.

How do I do A/B testing?

An A/B test has three basic parameters: a variable, a measurement and a duration.

- The variable is the element whose variations you will test

- The measurement is the metric that you will use to determine the winning variation

- The duration is the length of time you will let the test run before declaring a winner

Variables

In an A/B test, two or more variations of one variable are tested against one another.

In general, only one test should be performed on any one variable at a time. We also recommend that you only perform one test on any one page at a time. This way, you can be sure that each test will produce a result with sufficient statistical confidence (i.e. the reliability of the result) to be conclusive - allowing you to permanently implement the winner and move on to the next test.

The types of variable you can test are wide-ranging and include at least:

- Headline copy

- Body copy

- Hyperlink copy

- Images

- Page layouts

- Styles, fonts and colours

- Form fields

- Calls to action

Headlines and calls to action are among the most powerful variables to test, so be sure to include these in your plans.

Measurement

The correct measurement for each test depends on the variable being tested, which page it is on and what role both play in the customer journey.

On your home page, for example, you might test several headline variations and measure their performance in terms of bounce rate - lower being better, of course. Meanwhile, on a landing page you might test variations of the call to action, in which case conversions will almost certainly be the most appropriate metric.
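
As a rough illustration of these two metrics, the sketch below assumes bounce rate means the share of sessions that viewed only one page, and conversion rate means the share of sessions that ended in a conversion. The function names and all figures are hypothetical.

```python
def bounce_rate(single_page_sessions, total_sessions):
    """Share of sessions that left after viewing only one page (lower is better)."""
    return single_page_sessions / total_sessions


def conversion_rate(conversions, total_sessions):
    """Share of sessions that completed the desired action (higher is better)."""
    return conversions / total_sessions


# Hypothetical home page test: two headlines compared on bounce rate
headlines = {
    "Headline A": bounce_rate(420, 1000),
    "Headline B": bounce_rate(380, 1000),
}
best_headline = min(headlines, key=headlines.get)

# Hypothetical landing page test: two calls to action compared on conversions
calls_to_action = {
    "CTA A": conversion_rate(30, 1000),
    "CTA B": conversion_rate(45, 1000),
}
best_cta = max(calls_to_action, key=calls_to_action.get)

print(best_headline, best_cta)
```

Note that the winner is the minimum for bounce rate but the maximum for conversions, which is why the metric has to be chosen before the test starts.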

Duration

The duration of your test should be whatever it takes for you to get statistical confidence in the result. We don't expect you to calculate confidence, of course, but you do need to ask yourself: 'If I ran this test again for the same duration, would I be likely to get a very different result?' If the answer is yes, then you probably need to run the test for longer.

By running A/B tests for a month (or more) in a month when website traffic is 'normal' you remove much of the deviation that could come from different times of day, different days of the week and different times of the month (like month-end and pay day). This should be enough to make most test results conclusive and reliable.
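
If you do want to put a number on confidence, one common approach is a two-proportion z-test. The sketch below is a minimal illustration under that assumption, not a description of how any particular tool calculates its results; the function name and the visitor and conversion figures are hypothetical.

```python
from math import erf, sqrt


def confidence_of_difference(visitors_a, conversions_a, visitors_b, conversions_b):
    """Two-sided confidence (0 to 1) that A and B truly convert at different rates."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    # Pooled rate: the conversion rate if A and B were really identical
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_error == 0:
        return 0.0
    z = abs(rate_b - rate_a) / std_error
    # For a standard normal distribution, 1 minus the two-sided p-value is erf(z / sqrt(2))
    return erf(z / sqrt(2))


# Same conversion rates (5% vs 7%), measured on small and large samples
short_test = confidence_of_difference(1000, 50, 1000, 70)
long_test = confidence_of_difference(10000, 500, 10000, 700)
print(f"short test: {short_test:.1%}, long test: {long_test:.1%}")
```

With identical conversion rates, the longer test yields higher confidence simply because it has more visitors, which is why a low-traffic period calls for a longer duration.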

Your test rig

There are a number of ways you can run A/B tests on your site.

Paid solutions

Paid solutions include Optimizely, Wingify and Unbounce - the latter being aimed more at landing page optimisation. Each offers easy-to-use, visual A/B test creation and could be perfect for you if you don't have access to development resources or your website's back-end.

The free solution

If you do have the resources or the skills, however, you can do as we do and create your A/B tests for free in Google Analytics.

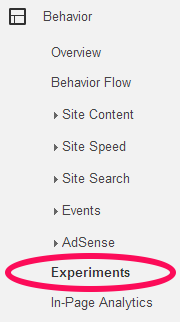

In Analytics, A/B tests are called 'Experiments' and you will find them under the 'Behaviour' menu.

Running Google Behaviour Experiments on your site calls for the following:

- Google Analytics tracking operational on your website

- Visible pages for each variation being tested

- An additional 'experiment code' in the head of the original page - also known as the control page

During the set-up process you will be asked to:

- Name your test

- Specify the objective - the metric to be measured

- Set the percentage of traffic to test - normally this should be 100% to achieve confidence as quickly as possible

- Enter the URLs of the original page and the test variations

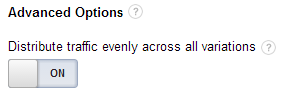

After you set the percentage of traffic to test, expand the Advanced Options and change the 'Distribute traffic evenly across all variations' setting to Yes. If you don't do this, Google will determine how much traffic each variation receives and your results will be biased towards one of them. For a fair test you want each variation to receive the same amount of traffic.

Finally, you will be given the experiment code to install on the original page and you will be good to go.

Finding the winner

After the test has run for the duration you opted for, head back into Google Analytics or your paid solution and check the results of your test. The winner should be obvious.

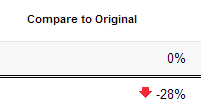

In Google Analytics, the performance of each variation is compared to the original and the result is displayed as a percentage alongside a green or a red arrow.
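
To sanity-check a comparison like this by hand, you can compute the relative change of a variation against the original. The sketch below assumes the percentage is a simple relative change, which may not match exactly how Google Analytics derives its figure; the function name and rates are hypothetical.

```python
def relative_change(original_rate, variation_rate):
    """Percentage change of the variation's metric relative to the original."""
    return (variation_rate - original_rate) / original_rate * 100


# Hypothetical conversion rates: original 5%, variation 6%
change = relative_change(0.05, 0.06)
direction = "up" if change > 0 else "down"
print(f"Variation is {direction} {abs(change):.1f}% on the original")
```

Whether a positive change is good depends on the metric: an increase in conversions is a win, while an increase in bounce rate is not.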

Once you know which variation was the winner, implement it permanently on the original page and plan your next test.